Conferences and Publications

preprint

TL;DR: LLMs can find a needle in a haystack but fail to retrieve the last few items in a short list — we name this the Position Curse, characterize it across open- and closed-source models, and show LoRA fine-tuning on PosBench helps but doesn't saturate.

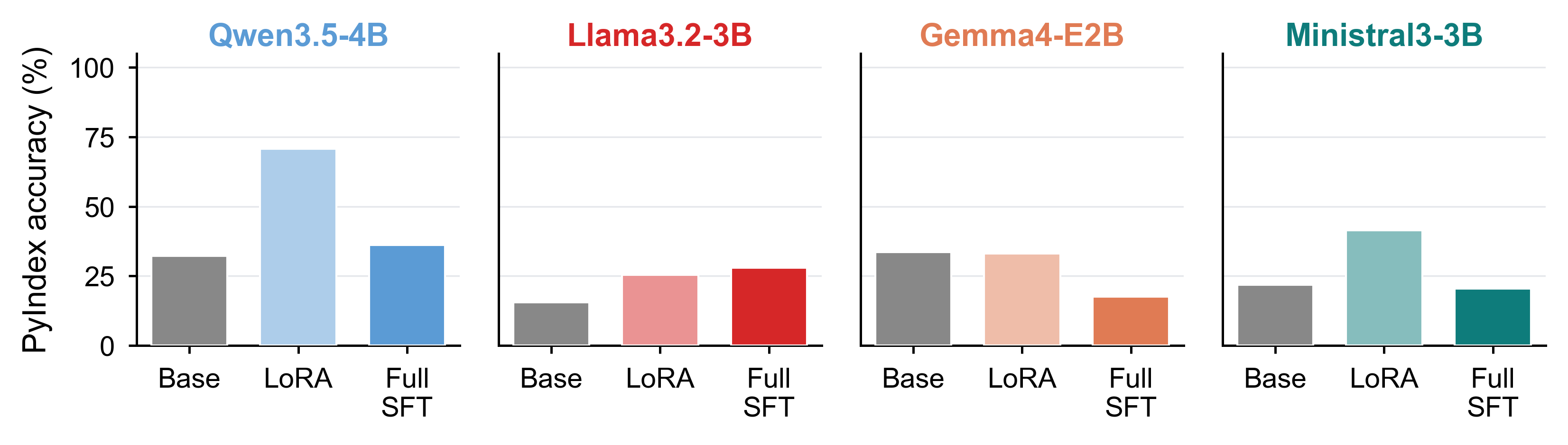

Modern large language models (LLMs) can find a needle in a haystack (locating a single relevant fact buried among hundreds of thousands of irrelevant tokens) with near-saturated accuracy, yet fail to retrieve the last few items in a short list. We call this failure the Position Curse. For instance, even in a two-line code snippet, Claude Opus 4.6 misidentifies the second-to-last line most of the time. To characterize this failure, we evaluated two complementary queries: given a position in a sequence (of letters or words), retrieve the corresponding item; and given an item, return its position. Each position is specified as a forward or backward offset from an anchor, either an endpoint of the list (its start or end) or another item in the list. Across both open-source and frontier closed-source models, backward retrieval substantially lags forward retrieval. To test whether this capability can be rescued by post-training, we constructed PosBench, a position-focused training dataset. LoRA fine-tuning improves both forward and backward retrieval and generalizes to a held-out code-understanding benchmark (PyIndex), yet absolute performance remains far from saturated. As LLM coding agents increasingly operate over large codebases where precise indexing becomes essential for code understanding and editing, position-based retrieval emerges as a key capability for future pretraining objectives and model design.
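
To make the two query types concrete, here is a minimal sketch of forward/backward item retrieval and its inverse (position retrieval) relative to an anchor. The function names, the convention that "start" and "end" are virtual slots just outside the list, and the assumption that items are unique and never literally named "start" or "end" are all illustrative choices, not PosBench's actual format.

```python
from typing import Sequence


def _anchor_index(items: Sequence[str], anchor: str) -> int:
    """Index of the anchor; "start"/"end" are virtual slots just outside the list."""
    if anchor == "start":
        return -1
    if anchor == "end":
        return len(items)
    return list(items).index(anchor)  # item anchor; items assumed unique


def retrieve_item(items: Sequence[str], anchor: str, offset: int) -> str:
    """Forward (offset > 0) or backward (offset < 0) lookup from an anchor."""
    idx = _anchor_index(items, anchor) + offset
    if not 0 <= idx < len(items):
        raise IndexError(f"offset {offset} from {anchor!r} is outside the list")
    return items[idx]


def retrieve_offset(items: Sequence[str], anchor: str, item: str) -> int:
    """Inverse query: signed offset of `item` relative to `anchor`."""
    return list(items).index(item) - _anchor_index(items, anchor)
```

Under this convention, the "second-to-last line" of a two-line snippet is `retrieve_item(["x = 1", "print(x)"], "end", -2)`, which returns `"x = 1"`: a backward offset of 2 from the end.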

preprint

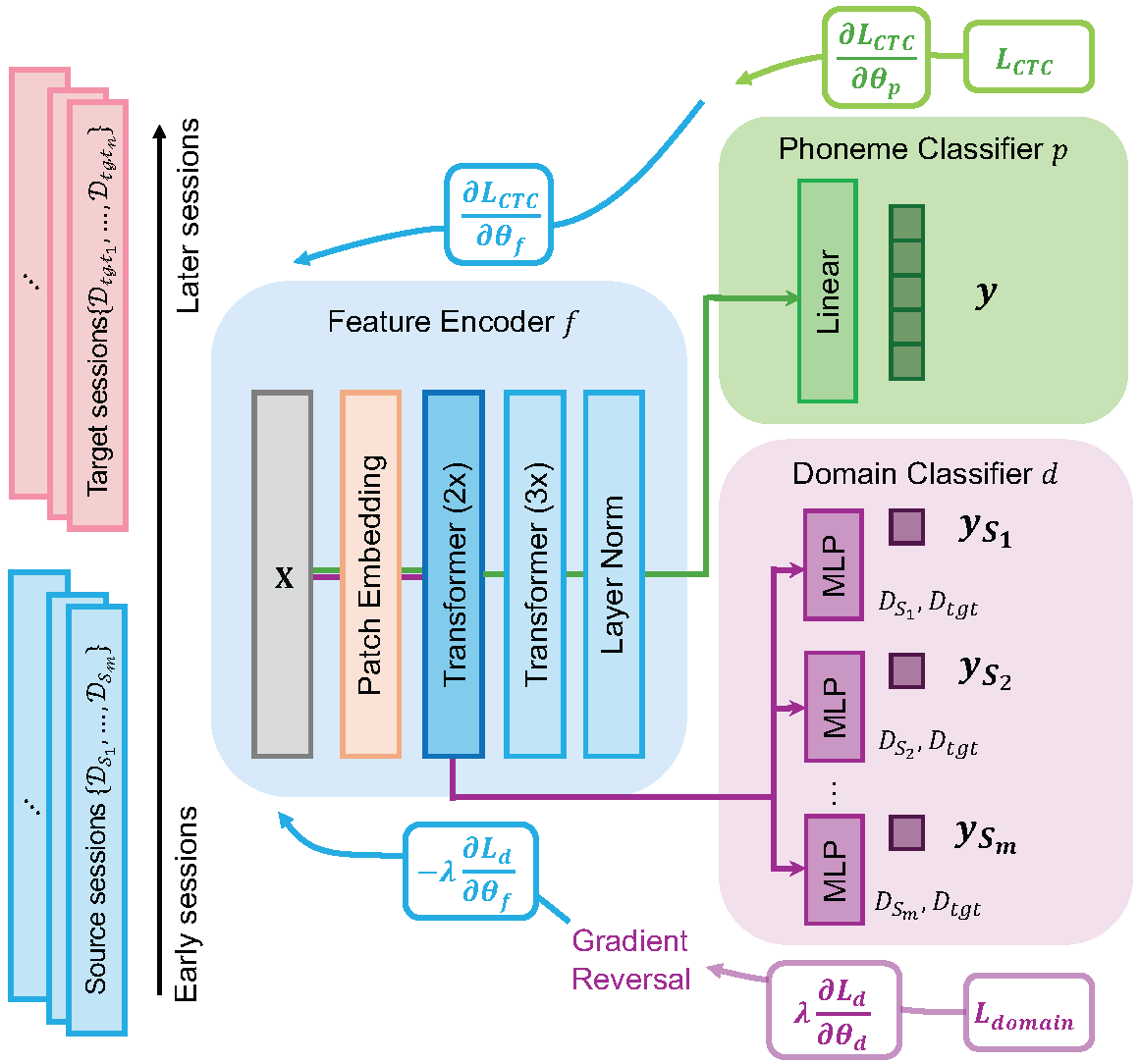

TL;DR: ALIGN is an adversarial domain-alignment framework for intracortical speech BCIs that generalizes to unseen sessions, lowering phoneme and word error rates relative to baselines.

Intracortical brain-computer interfaces (BCIs) can decode speech from neural activity with high accuracy when trained on data pooled across recording sessions. In realistic deployment, however, models must generalize to new sessions without labeled data, and performance often degrades due to cross-session nonstationarities (e.g., electrode shifts, neural turnover, and changes in user strategy). In this paper, we propose ALIGN, a session-invariant learning framework based on multi-domain adversarial neural networks for semi-supervised cross-session adaptation. ALIGN trains a feature encoder jointly with a phoneme classifier and a domain classifier operating on the latent representation. Through adversarial optimization, the encoder is encouraged to preserve task-relevant information while suppressing session-specific cues. We evaluate ALIGN on intracortical speech decoding and find that it generalizes consistently better to previously unseen sessions, improving both phoneme error rate and word error rate relative to baselines. These results indicate that adversarial domain alignment is an effective approach for mitigating session-level distribution shift and enabling robust longitudinal BCI decoding.
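
For reference, the generic multi-domain domain-adversarial (DANN-style) objective that this kind of training follows can be written as below. This is a sketch of the standard formulation, assumed rather than taken from the paper, so ALIGN's exact losses and weighting may differ. Here $G_f$ is the feature encoder, $G_y$ the phoneme classifier, $G_d$ the session (domain) classifier, $\ell_y$ the phoneme loss, $\ell_d$ a $K$-way cross-entropy over session labels $s_i \in \{1,\dots,K\}$, $\mathcal{L}$ the labeled samples, $\mathcal{A}$ all samples including unlabeled sessions, and $\lambda$ a trade-off weight; all symbols are introduced here for illustration.

```latex
\begin{aligned}
E(\theta_f,\theta_y,\theta_d) &= \frac{1}{|\mathcal{L}|}\sum_{i\in\mathcal{L}} \ell_y\big(G_y(G_f(x_i;\theta_f);\theta_y),\, y_i\big)
\;-\; \lambda\,\frac{1}{|\mathcal{A}|}\sum_{i\in\mathcal{A}} \ell_d\big(G_d(G_f(x_i;\theta_f);\theta_d),\, s_i\big), \\
(\hat\theta_f,\hat\theta_y) &= \arg\min_{\theta_f,\theta_y} E(\theta_f,\theta_y,\hat\theta_d), \qquad
\hat\theta_d = \arg\max_{\theta_d} E(\hat\theta_f,\hat\theta_y,\theta_d).
\end{aligned}
```

Maximizing over $\theta_d$ trains the session classifier to identify sessions, while the minus sign drives the encoder toward features from which the session cannot be predicted. In DANN-style training this saddle point is usually reached in a single backward pass with a gradient reversal layer between $G_f$ and $G_d$.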

Science Advances

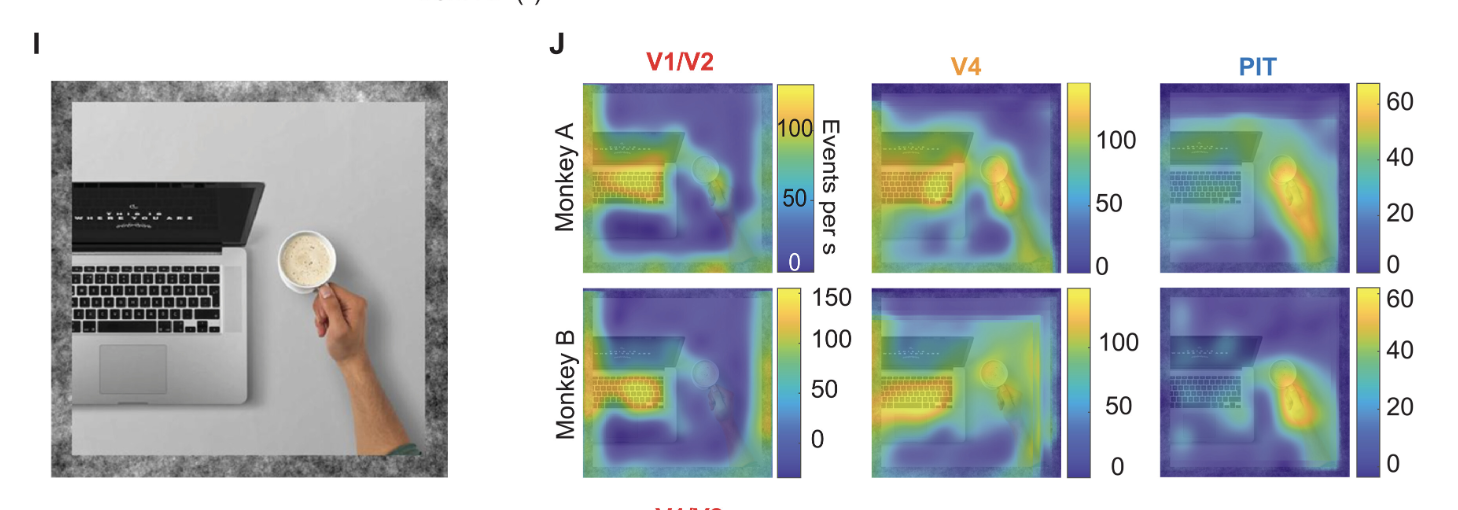

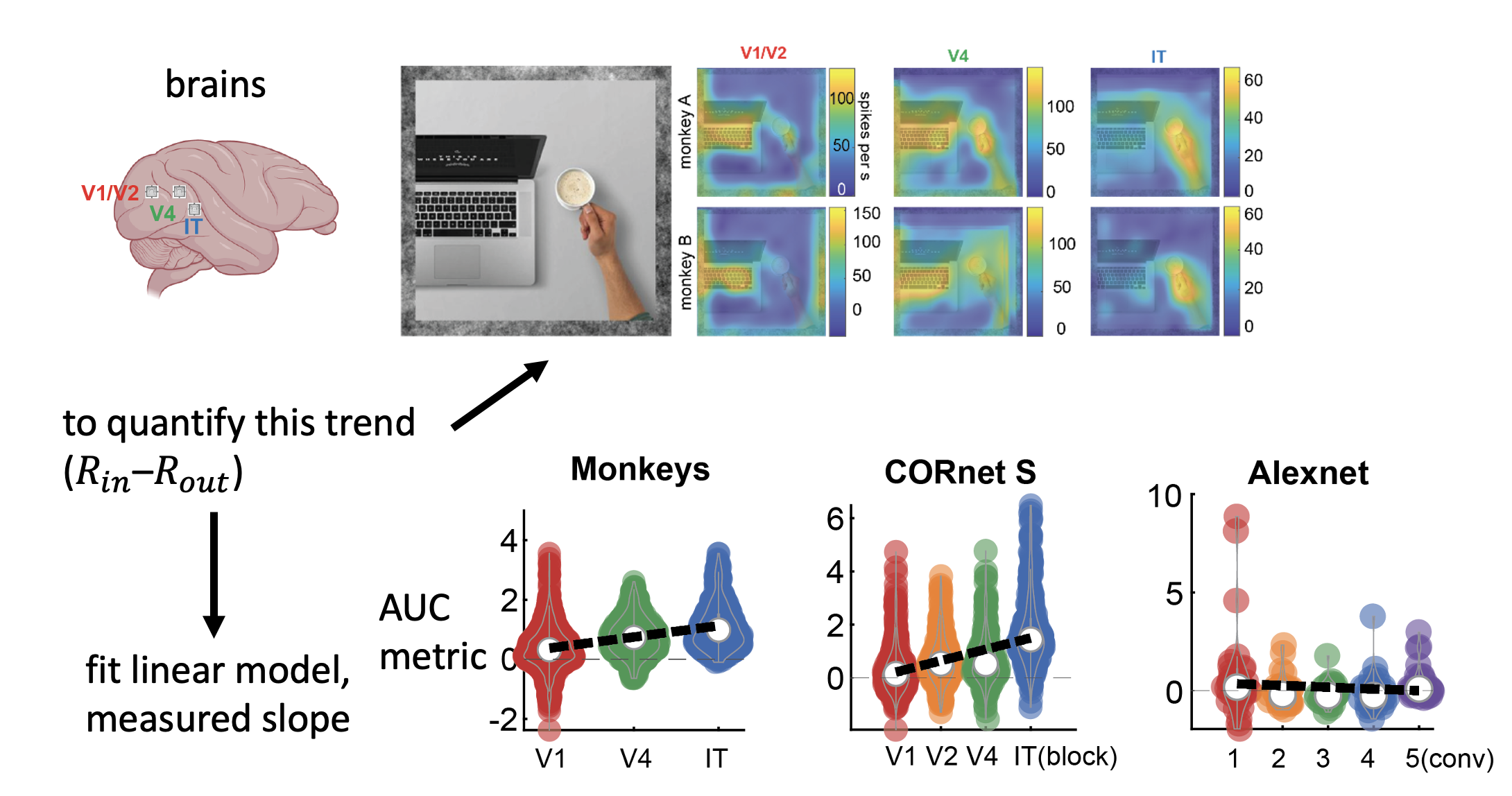

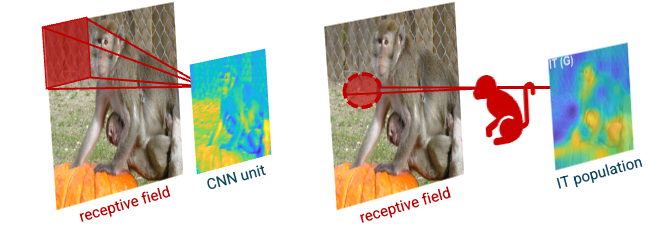

TL;DR: Multielectrode recordings across V1/V2, V4, and PIT, analyzed with per-neuron "heatmaps," show that monkey ventral-stream neurons preferentially encode local animal features over generic objects, a trend that strengthens up the visual hierarchy.

What are the fundamental principles that inform representation in the primate visual brain? While objects have become an intuitive framework for studying neurons in many parts of cortex, it is possible that neurons follow a more expressive organizational principle, such as encoding generic features present across textures, places, and objects. In this study, we used multielectrode arrays to record from neurons in the early (V1/V2), middle (V4), and later [posterior inferotemporal (PIT) cortex] areas across the visual hierarchy, estimating each neuron's local operation across natural scenes via "heatmaps." We found that, while populations of neurons with foveal receptive fields across V1/V2, V4, and PIT responded over the full scene, they focused on salient subregions within object outlines. Notably, neurons preferentially encoded animal features rather than general objects, with this trend strengthening along the visual hierarchy. These results show that the monkey ventral stream is partially organized to encode local animal features over objects, even as early as primary visual cortex.

Nature

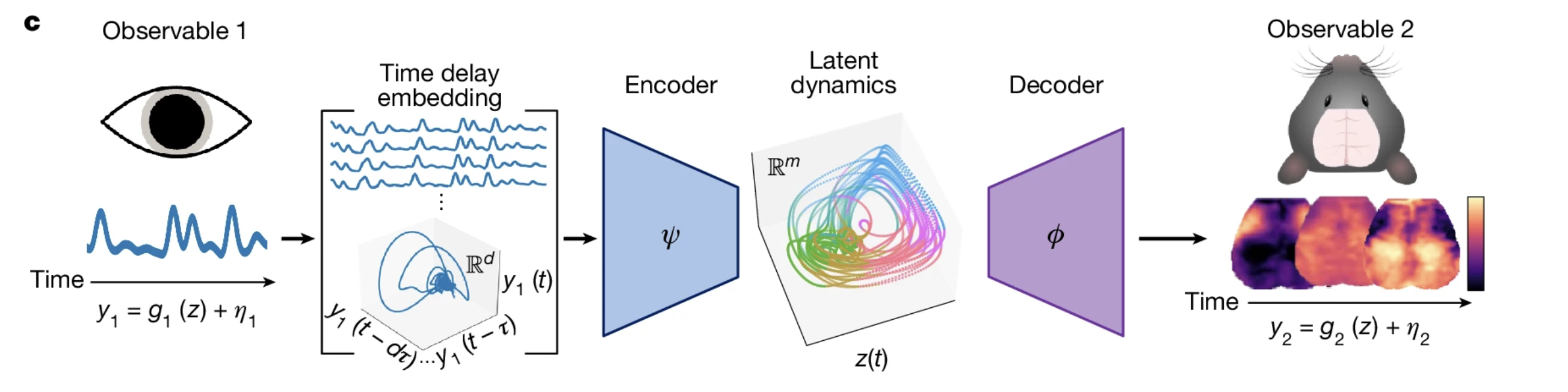

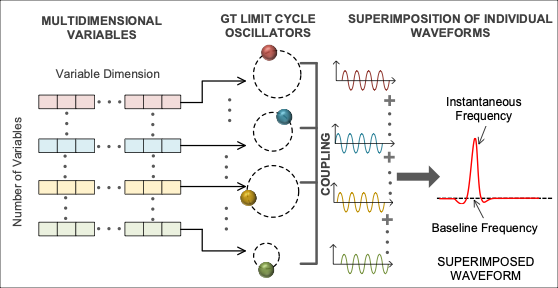

TL;DR: A single scalar measure of arousal (e.g., pupil diameter) suffices to reconstruct multimodal, brain-wide spatiotemporal physiology — reframing many "spontaneous" fluctuations as reflections of a unified arousal-related process.

Neural activity in awake organisms shows widespread and spatiotemporally diverse correlations with behavioral and physiological measurements. We propose that this covariation reflects in part the dynamics of a unified, arousal-related process that regulates brain-wide physiology on the timescale of seconds. Taken together with theoretical foundations in dynamical systems, this interpretation leads us to a surprising prediction: that a single, scalar measurement of arousal (e.g., pupil diameter) should suffice to reconstruct the continuous evolution of multimodal, spatiotemporal measurements of large-scale brain physiology. To test this hypothesis, we perform multimodal, cortex-wide optical imaging and behavioral monitoring in awake mice. We demonstrate that spatiotemporal measurements of neuronal calcium, metabolism, and blood-oxygen can be accurately and parsimoniously modeled from a low-dimensional state-space reconstructed from the time history of pupil diameter. Extending this framework to behavioral and electrophysiological measurements from the Allen Brain Observatory, we demonstrate the ability to integrate diverse experimental data into a unified generative model via mappings from an intrinsic arousal manifold. Our results support the hypothesis that spontaneous, spatially structured fluctuations in brain-wide physiology, widely interpreted to reflect regionally specific neural communication, are in large part reflections of an arousal-related process. This enriched view of arousal dynamics has broad implications for interpreting observations of brain, body, and behavior as measured across modalities, contexts, and scales.
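
One standard way to reconstruct a state space from the time history of a single scalar signal such as pupil diameter is time-delay embedding, where each time point is represented by a vector of lagged samples. The sketch below illustrates only that idea; the embedding dimension `dim` and lag `tau` are hypothetical parameters, and the paper's actual reconstruction (including any dimensionality reduction applied afterwards) is not specified here.

```python
from typing import List, Sequence


def delay_embed(signal: Sequence[float], dim: int, tau: int) -> List[List[float]]:
    """Return delay vectors [x(t), x(t - tau), ..., x(t - (dim - 1) * tau)].

    Rows start at the first t with a full history, so the output has
    len(signal) - (dim - 1) * tau rows.
    """
    if dim < 1 or tau < 1:
        raise ValueError("dim and tau must be positive")
    span = (dim - 1) * tau  # how far back the oldest lag reaches
    if len(signal) <= span:
        raise ValueError("signal too short for the requested embedding")
    return [
        [signal[t - k * tau] for k in range(dim)]
        for t in range(span, len(signal))
    ]
```

Each imaged signal (calcium, metabolism, hemodynamics) could then be regressed onto these delay vectors, or onto a few of their principal components; that mapping step is omitted here.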

preprint on medRxiv

TL;DR: Computational ethology applied to free-moving open-field human behavior identifies fine-grained motifs that distinguish bipolar disorder from controls better than traditional ethological and psychiatric measures.

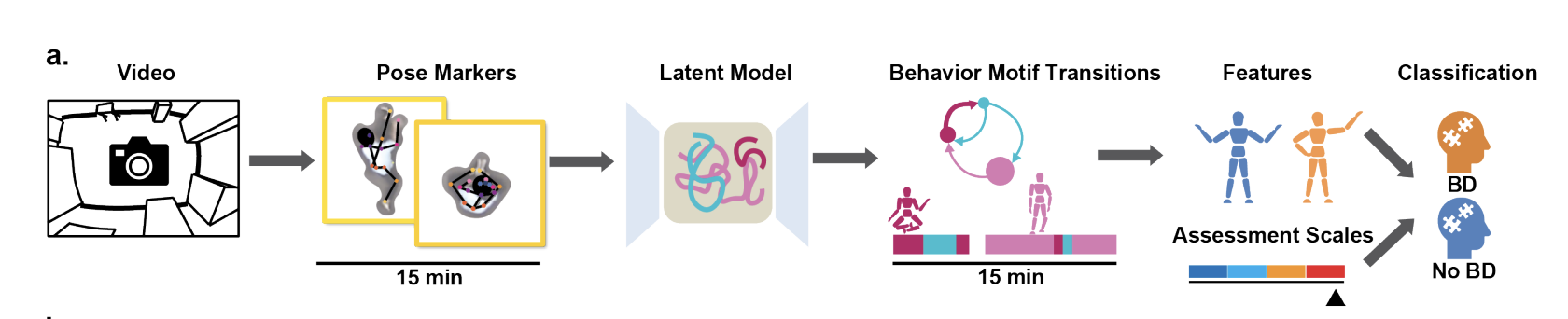

New technologies for the quantification of behavior have revolutionized animal studies in social, cognitive, and pharmacological neurosciences. However, comparable studies in understanding human behavior, especially in psychiatry, are lacking. In this study, we utilized data-driven machine learning to analyze natural, spontaneous open-field human behaviors from people with euthymic bipolar disorder (BD) and non-BD participants. Our computational paradigm identified representations of distinct sets of actions (motifs) that capture the physical activities of both groups of participants. We propose novel measures for quantifying dynamics, variability, and stereotypy in BD behaviors. These fine-grained behavioral features reflect patterns of cognitive functions of BD and better predict BD compared with traditional ethological and psychiatric measures and action recognition approaches. This research represents a significant computational advancement in human ethology, enabling the quantification of complex behaviors in real-world conditions and opening new avenues for characterizing neuropsychiatric conditions from behavior.
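
As an illustration of what frame-level motif measures can look like, the sketch below computes motif usage, motif bouts (runs of one motif), and a normalized transition entropy from a sequence of per-frame motif labels. These definitions are assumptions chosen for illustration, with lower entropy loosely read as more stereotyped sequencing; they are not the measures proposed in the paper.

```python
import math
from collections import Counter
from typing import Dict, Hashable, List, Sequence, Tuple


def motif_usage(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Fraction of frames assigned to each motif."""
    counts = Counter(labels)
    return {motif: n / len(labels) for motif, n in counts.items()}


def to_bouts(labels: Sequence[Hashable]) -> List[Tuple[Hashable, int]]:
    """Collapse runs of identical frame labels into (motif, duration) bouts."""
    bouts: List[Tuple[Hashable, int]] = []
    for lab in labels:
        if bouts and bouts[-1][0] == lab:
            bouts[-1] = (lab, bouts[-1][1] + 1)
        else:
            bouts.append((lab, 1))
    return bouts


def transition_entropy(labels: Sequence[Hashable]) -> float:
    """Shannon entropy of bout-to-bout transitions, normalized to [0, 1].

    0 means only one kind of transition occurs (highly stereotyped);
    1 means all k * (k - 1) possible transitions occur equally often.
    """
    seq = [motif for motif, _ in to_bouts(labels)]
    pairs = Counter(zip(seq, seq[1:]))
    total = sum(pairs.values())
    k = len(set(labels))
    max_pairs = k * (k - 1)  # self-transitions cannot occur between bouts
    if total == 0 or max_pairs < 2:
        return 0.0
    h = -sum((c / total) * math.log2(c / total) for c in pairs.values())
    return h / math.log2(max_pairs)
```

Per-participant summaries like these could then be compared between BD and non-BD groups or fed to a classifier.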

Accepted for Computational and Systems Neuroscience (COSYNE)

TL;DR: Unsupervised pose-based modeling of free-moving humans identifies behavioral motifs that classify bipolar disorder vs. healthy controls, outperforming CV models, expert annotation, and clinical scales.

Free-moving spontaneous behavior is a window to probe the brain and mind. Individuals with neuropsychiatric conditions such as bipolar disorder (BD) can exhibit distinctive patterns of behavior (McReynolds, 1962). Our objective was to quantify free-moving spontaneous human behavior in real-world contexts among euthymic BD individuals and to differentiate them from a healthy control (HC) population based on the identified behavioral features. We analyzed videos of 25 BD patients and 25 HC participants moving freely in an unexplored room for 15 minutes (Young et al., 2007). Using a key-point estimation toolbox (Mathis et al., 2018), we extracted human poses and represented them with a latent variable model (Luxem et al., 2022). Clustering the latent representations identified repeated behavioral motifs, revealing features unique to BD that align with known clinical observations. Our approach outperformed computer vision (CV) models, expert human annotation, and even established clinical assessment scales in distinguishing BD from HC.
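
The clustering step can be illustrated with plain k-means over per-frame latent vectors, reading each cluster as a motif. This is a generic sketch: the source does not state which clustering algorithm or number of motifs was used, and the deterministic initialization (first `k` distinct points) is chosen only to make the example reproducible.

```python
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def _sqdist(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: Sequence[Vector], k: int, iters: int = 100) -> List[int]:
    """Cluster latent vectors into k groups; each cluster index is a motif label."""
    # Deterministic init (an assumption, for reproducibility): first k distinct points.
    centers: List[Vector] = []
    for p in points:
        if p not in centers:
            centers.append(p)
        if len(centers) == k:
            break
    if len(centers) < k:
        raise ValueError("need at least k distinct points")
    labels: List[int] = []
    for _ in range(iters):
        # Assignment step: nearest center by squared Euclidean distance.
        new_labels = [min(range(k), key=lambda j: _sqdist(p, centers[j])) for p in points]
        if new_labels == labels:
            break  # assignments stopped changing
        labels = new_labels
        # Update step: each center moves to the mean of its assigned points.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centers[j] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels
```

For real pose latents, a library implementation with several random restarts (for example scikit-learn's `KMeans`) would be the more robust choice.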

Accepted as a poster for Society for Neuroscience (SfN)

TL;DR: Animal-mask AUC values rise from V1 (0.19) to V4 (0.63) to IT (0.73), supporting animal-feature encoding as an organizing principle of the macaque ventral stream.

Macaque monkeys are foraging and social animals that spend a significant fraction of their time identifying conspecifics, classifying their actions, and avoiding threats from other animals. This suggests that in learning information from the visual world, many neurons of the monkey ventral stream might focus on encoding animal-based features. We discovered that animal masks identified regions with strong neuronal activity for IT better than they did for V4 and for V4 better than for V1 (AUC values, median ± SE; V1: 0.19 ± 0.01, V4: 0.63 ± 0.06, IT: 0.73 ± 0.08). Collectively, our results provide further evidence of an organizing principle of the monkey ventral stream — to encode information diagnostic of animals.
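
A mask-based AUC of this kind can be computed by treating each pixel's heatmap value as a score and its animal-mask membership as the label, then measuring how often a masked pixel outscores an unmasked one (the Mann-Whitney formulation of ROC AUC). The abstract does not spell out the exact procedure, so this pixel-level scoring is an assumption.

```python
from typing import List, Sequence


def mask_auc(heatmap: Sequence[Sequence[float]], mask: Sequence[Sequence[bool]]) -> float:
    """ROC AUC of heatmap values for separating masked from unmasked pixels.

    Equals P(score_in > score_out) + 0.5 * P(tie) over all
    (masked, unmasked) pixel pairs.
    """
    inside: List[float] = []
    outside: List[float] = []
    for hrow, mrow in zip(heatmap, mask):
        for value, in_mask in zip(hrow, mrow):
            (inside if in_mask else outside).append(value)
    if not inside or not outside:
        raise ValueError("mask must contain both masked and unmasked pixels")
    wins = 0.0
    for a in inside:
        for b in outside:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5  # ties count as half a win
    return wins / (len(inside) * len(outside))
```

The pairwise loop is O(n·m) and kept for clarity; for full-resolution heatmaps, a rank-based computation gives the same value much faster.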

Accepted as a workshop presentation for Computational and Systems Neuroscience (COSYNE) 2022

Workshop presentation on representations in brains and neural networks.

Shape Recognition in Ultrasound with Deep Learning (2020)

Washington University in St. Louis, McKelvey School of Engineering, Department of Electrical and Systems Engineering Capstone Design Thesis

TL;DR: Pre-trained neural network recognizes geometric shapes in ultrasound images of 3D-printed samples with 96% accuracy; released a database for future ultrasound imaging research.

Ultrasound is one of the most common imaging techniques in clinical settings, but its usefulness is limited by low resolution and heavy dependence on operator skill. Here, we applied a pre-trained neural network to recognize geometric shapes in ultrasound images of 3D-printed samples. We achieved 96% task accuracy and created a database available for future research in ultrasound imaging.

Industry Research

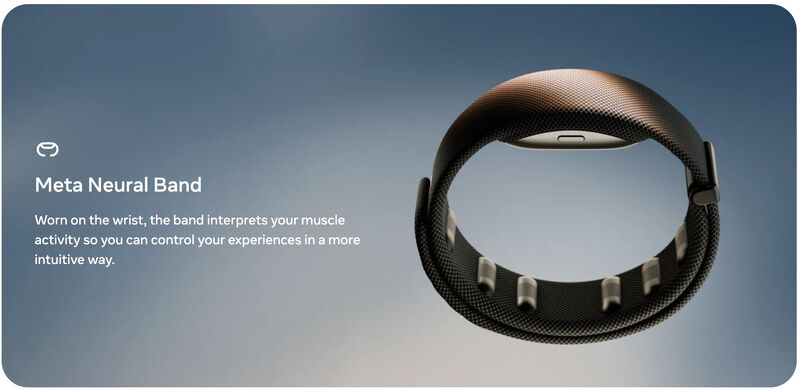

TL;DR: Built models that improved the robustness of a handwriting recognition system productionized in the Meta Neural Band; live demoed at Meta Connect 2025 and named one of TIME's Best Inventions of 2025.

Developed models to improve the robustness of a handwriting recognition system productionized in the Meta Neural Band, which captures electrical activity from subtle forearm muscle movements for intuitive, gesture-based input. This work was live demoed at Meta Connect 2025 and later recognized as one of TIME's Best Inventions of 2025.

TL;DR: Developed EMG–vision multimodal foundation models for Meta's neural wristbands, improving performance across multiple downstream tasks including pose estimation.

Developed EMG–vision multimodal foundation models for neural wristbands at Meta, improving performance across multiple downstream tasks such as pose estimation. The work explored joint representation learning between surface electromyography signals and synchronized vision streams to support generalization across users and tasks.

Undergraduate Projects

Embedded Systems: Closed-Loop Incubator with Remote Monitoring

Embedded Systems & IoT Project

Technologies: Arduino, Photon, Adafruit SI7021, Embedded Systems

Features:


- Plastic bottles: recycle and reuse

- Hardware: wires, resistors, fan, Adafruit SI7021, Photon, Arduino

- Embedded system: Sensors and Actuators + Feedback Control

- UI: graphical sliders and other incubator information

- Networking infrastructure: monitor and control the incubator remotely
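
The feedback-control loop can be sketched as a simple on/off controller with hysteresis: the fan switches on when the measured temperature rises above a setpoint plus a margin, and off once it falls below the setpoint minus that margin. The setpoint, the band width, and the assumption that the fan is used for cooling are hypothetical; on the actual Photon/Arduino hardware this logic would run in firmware, with readings from the SI7021 sensor.

```python
from dataclasses import dataclass


@dataclass
class FanController:
    """Bang-bang controller with hysteresis (hypothetical parameters)."""

    setpoint_c: float = 37.0  # target temperature in deg C (assumed)
    band_c: float = 0.5       # half-width of the dead band in deg C (assumed)
    fan_on: bool = False

    def update(self, temperature_c: float) -> bool:
        """Return the new fan state for one sensor reading."""
        if temperature_c > self.setpoint_c + self.band_c:
            self.fan_on = True
        elif temperature_c < self.setpoint_c - self.band_c:
            self.fan_on = False
        # Inside the band the previous state is kept, which avoids rapid toggling.
        return self.fan_on
```

The dead band trades a little temperature ripple for far fewer actuator switches than a single-threshold rule would produce near the setpoint.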

Embedded Systems: Networked Garage Controller with Web UI

C++, JavaScript, HTML, Embedded Systems

Technologies: C++, JavaScript, HTML, Photon, Arduino

Features:


- Hardware: wires, resistors, buttons, LEDs, two Photons

- Embedded system: Sensors and Actuators + Remote Control

- UI: responsive website and app

- Networking infrastructure: monitor and control the garage light and door remotely

- Functionality: automatically close the door and turn off the light at a set time

Computer Vision Projects in Python

Computer Vision & Machine Learning

Technologies: Python, OpenCV, TensorFlow, PyTorch

Projects Include:


- Edge detection + Line detection

- Image Restoration & Optimization

- Photometric Stereo

- Camera Projection and Transformations

- Estimation, Sampling, Robust Fitting

- Epipolar Geometry, Binocular Stereo

- Optical flow

- Neural Networks

- Semantic Vision Tasks

- GANs and VAEs (unsupervised learning)
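
As a small illustration of the first topic, gradient-based edge detection can be sketched with Sobel filters in plain Python. This is a generic textbook version rather than code from these projects (which list OpenCV among their tools); it computes the gradient magnitude for interior pixels only.

```python
import math
from typing import List, Sequence

SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel_magnitude(img: Sequence[Sequence[float]]) -> List[List[float]]:
    """Gradient magnitude sqrt(gx^2 + gy^2); border pixels are left at 0.

    Kernels are applied as cross-correlation; the sign flip of true
    convolution does not change the magnitude.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = gy = 0.0
            for i in range(3):
                for j in range(3):
                    v = img[r + i - 1][c + j - 1]
                    gx += SOBEL_X[i][j] * v
                    gy += SOBEL_Y[i][j] * v
            out[r][c] = math.hypot(gx, gy)
    return out


def edges(img: Sequence[Sequence[float]], threshold: float) -> List[List[bool]]:
    """Binary edge map: pixels whose gradient magnitude exceeds the threshold."""
    return [[m > threshold for m in row] for row in sobel_magnitude(img)]
```

A full detector such as Canny adds smoothing, non-maximum suppression, and hysteresis thresholding on top of this gradient step.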

Object-Oriented Web App: Task Manager in React

React.js, Web Development

Technologies: React.js, JavaScript, HTML, CSS

Interactive task-management web application built with the React.js framework.


Control Systems: 3R Manipulator Kinematics and Dynamics in MATLAB/Simulink

MATLAB, Simulink, Robotics

Technologies: MATLAB, Simulink, Robotics Control Systems

Kinematic and dynamic analysis and control of a 3R (three-revolute-joint) robotic manipulator using MATLAB and Simulink. [\[CODE\]](https://github.com/ZhanqiZhang66/3R-Robotics)

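
Kinematic analysis of a serial arm usually starts from forward kinematics: the end-effector pose follows from summing the link vectors at cumulative joint angles. The sketch below assumes a planar arm with revolute joints and hypothetical link lengths; the linked repository may use a different (for example spatial, Denavit-Hartenberg) parameterization.

```python
import math
from typing import Sequence, Tuple


def forward_kinematics(
    thetas: Sequence[float], lengths: Sequence[float]
) -> Tuple[float, float, float]:
    """End-effector (x, y, phi) of a planar serial arm with revolute joints.

    thetas: joint angles in radians, each relative to the previous link.
    lengths: link lengths, one per joint (three for a 3R arm).
    """
    if len(thetas) != len(lengths):
        raise ValueError("need one link length per joint")
    x = y = phi = 0.0
    for theta, link in zip(thetas, lengths):
        phi += theta  # absolute orientation of the current link
        x += link * math.cos(phi)
        y += link * math.sin(phi)
    return x, y, phi
```

Differentiating these expressions with respect to the joint angles gives the Jacobian used for velocity kinematics, and the dynamic model (for example in Simulink) builds on the same link geometry.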

Object-Oriented AI: Game-Playing Engine for Tic-Tac-Toe and Gomoku in C++

C++, Artificial Intelligence

Technologies: C++, AI Algorithms, Game Theory

Implementation of artificial intelligence algorithms for classic board games including tic-tac-toe and Gomoku with intelligent move prediction and strategy optimization. [\[CODE\]](https://github.com/ZhanqiZhang66/AI-Gomuku)

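
Game-playing engines of this kind are commonly built on minimax search. A minimal tic-tac-toe sketch follows; the board encoding (a 9-character string of "X", "O", and ".") and the scoring (+1 for an X win, -1 for an O win, 0 for a draw) are assumptions, and the linked Gomoku engine, which needs depth limits and heuristics on its larger board, may work quite differently.

```python
from functools import lru_cache
from typing import Optional, Tuple

LINES = ((0, 1, 2), (3, 4, 5), (6, 7, 8), (0, 3, 6),
         (1, 4, 7), (2, 5, 8), (0, 4, 8), (2, 4, 6))


def winner(board: str) -> Optional[str]:
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)  # memoize positions so the full game tree is cheap
def minimax(board: str, player: str) -> Tuple[int, Optional[int]]:
    """Return (score, best_move) with X maximizing and O minimizing."""
    w = winner(board)
    if w is not None:
        return (1 if w == "X" else -1), None
    moves = [i for i, cell in enumerate(board) if cell == "."]
    if not moves:
        return 0, None  # board full with no winner: draw
    best_score, best_move = None, None
    for m in moves:
        child = board[:m] + player + board[m + 1:]
        score, _ = minimax(child, "O" if player == "X" else "X")
        better = best_score is None or (
            score > best_score if player == "X" else score < best_score
        )
        if better:
            best_score, best_move = score, m
    return best_score, best_move
```

Solving the empty board with `minimax("." * 9, "X")` returns a score of 0, reflecting that tic-tac-toe is a draw under perfect play.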